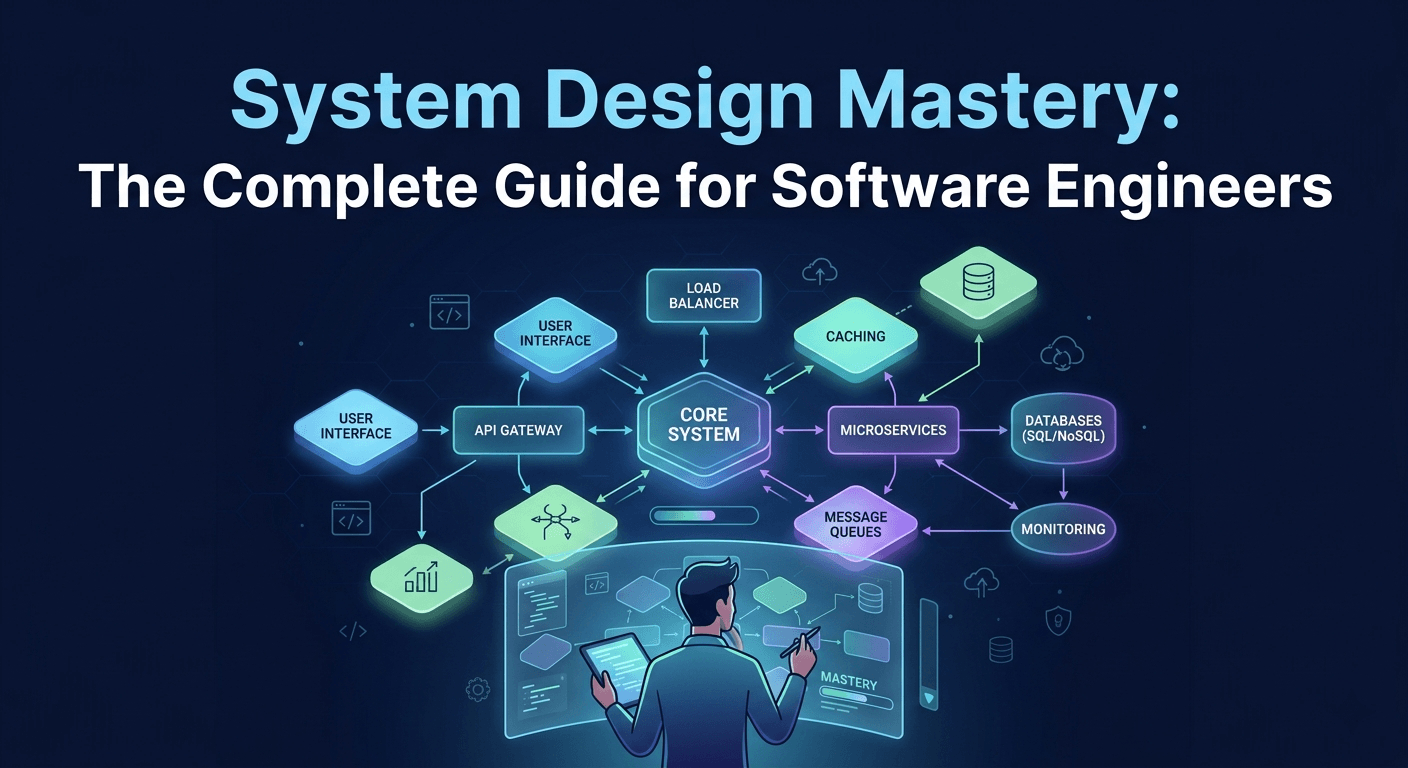

System Design Mastery: The Complete Guide for Software Engineers

In software engineering, writing code is the baseline; the ability to architect systems is what distinguishes senior engineers. This guide covers the core principles of high-level system design, from load balancing and caching strategies to microservices and database sharding. Whether you are preparing for a technical interview or scaling an application to support millions of users, it gives you a roadmap.

System design interviews are often the biggest obstacle between mid-level developers and senior engineering roles at top tech companies. More importantly, system design skills separate engineers who can build features from those who can architect entire platforms.

Whether you're preparing for FAANG interviews or want to design better systems at your current job, this guide will teach you the frameworks, patterns, and thinking processes that senior engineers use to design scalable systems.

By the end, you'll understand how to design systems like Netflix, Uber, Twitter, and YouTube from scratch, and apply these patterns to solve real-world engineering challenges.

Prerequisites

To get the most from this guide, you should have:

- 2+ years of software development experience

- Understanding of basic data structures (arrays, trees, graphs, hash tables)

- Familiarity with databases and APIs

- Basic knowledge of how the web works (HTTP, DNS, servers)

- (Distributed systems experience is not required)

What Is System Design?

System design is the process of defining the architecture, components, modules, interfaces, and data flow for a system to satisfy specific requirements.

It answers questions like:

- How does Netflix serve video to more than 200 million subscribers?

- How does Google process billions of searches per day?

- How does Uber match riders with drivers in real-time?

- How does Instagram store and serve billions of photos?

Why System Design Matters

For Interviews:

- Most senior+ engineering roles include system design rounds

- Often the deciding factor between candidates with similar coding skills

- Tests real-world engineering judgment, not just algorithms

For Your Career:

- Ability to design systems is what makes you a senior engineer

- Critical for technical leadership and architecture roles

- Helps you make better engineering decisions daily

- Essential for building scalable products

For Your Team:

- Poor design decisions cost companies millions in rewrites

- Good architecture scales smoothly as users grow

- Prevents technical debt and system failures

The System Design Framework

Use this framework for every system design question:

1. REQUIREMENTS (5 minutes)

   - Clarify functional and non-functional requirements

   - Define scope and constraints

2. CAPACITY ESTIMATION (5 minutes)

   - Calculate traffic, storage, bandwidth needs

   - Identify bottlenecks

3. HIGH-LEVEL DESIGN (10 minutes)

   - Draw basic architecture diagram

   - Identify major components

4. DETAILED DESIGN (15 minutes)

   - Deep dive into critical components

   - Discuss trade-offs

5. SCALE AND OPTIMIZE (10 minutes)

   - Add caching, load balancing, replication

   - Handle failures

6. ADDITIONAL CONSIDERATIONS (5 minutes)

   - Security, monitoring, deployment

Let's apply this framework to real examples.

Example 1: Design URL Shortener (Like Bit.ly)

Step 1: Requirements Clarification

Functional Requirements:

- Given a long URL, generate a short URL

- Short URL redirects to original long URL

- Optional: Custom short URLs

- Optional: Analytics (click count, locations)

Non-Functional Requirements:

- High availability (99.9% uptime)

- Low latency (< 100ms redirect time)

- Short URLs should be unpredictable

- System should scale to 100M new URLs per month

Constraints:

- Short URL length: 6-7 characters

- Read-heavy system (100:1 read-write ratio)

- URLs don't expire

Step 2: Capacity Estimation

Traffic:

- 100M new URLs per month

- ~40 new URLs per second

- With 100:1 ratio: 4000 reads/second

Storage:

- Each URL entry: ~500 bytes (long URL + metadata)

- 100M URLs = 50 GB per month

- For 5 years: 50 GB × 60 = 3 TB

Bandwidth:

- Write: 40 requests/sec × 500 bytes = 20 KB/sec

- Read: 4000 requests/sec × 500 bytes = 2 MB/sec

Cache:

- 80-20 rule: 20% URLs generate 80% traffic

- Cache 20% of each day's new URLs: ~3.3M URLs × 20% × 500 bytes ≈ 330 MB, so budget ~500 MB of cache
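
The arithmetic above can be checked with a few lines of Python. This is a rough sketch using the figures from this section; the 30-day month and the variable names are assumptions made for illustration. The exact results come out slightly below the rounded figures quoted above (about 38.6 writes/sec rather than 40).

```python
# Back-of-envelope estimates for the URL shortener.
# Assumption: a 30-day month. The other inputs come from the lists above.
SECONDS_PER_MONTH = 30 * 24 * 3600

new_urls_per_month = 100_000_000
read_write_ratio = 100
bytes_per_entry = 500

# Traffic
writes_per_sec = new_urls_per_month / SECONDS_PER_MONTH
reads_per_sec = writes_per_sec * read_write_ratio

# Storage (decimal units: 1 GB = 1e9 bytes)
storage_per_month_gb = new_urls_per_month * bytes_per_entry / 1e9
storage_5_years_tb = storage_per_month_gb * 60 / 1e3

# Bandwidth
write_bandwidth_kb_s = writes_per_sec * bytes_per_entry / 1e3
read_bandwidth_mb_s = reads_per_sec * bytes_per_entry / 1e6

print(f"writes/sec:        {writes_per_sec:,.1f}")
print(f"reads/sec:         {reads_per_sec:,.0f}")
print(f"storage per month: {storage_per_month_gb:,.0f} GB")
print(f"storage, 5 years:  {storage_5_years_tb:,.0f} TB")
print(f"write bandwidth:   {write_bandwidth_kb_s:,.1f} KB/s")
print(f"read bandwidth:    {read_bandwidth_mb_s:,.2f} MB/s")
```

In an interview, rounding to one significant figure is usually enough; the point is the order of magnitude, not the decimals.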

Step 3: High-Level Design

            ┌─────────┐
            │ Client  │
            └────┬────┘
                 │
                 ▼
        ┌─────────────────┐
        │  Load Balancer  │
        └────────┬────────┘
                 │
                 ▼
        ┌─────────────────┐
        │   API Servers   │  (Create/Redirect)
        └────────┬────────┘
                 │
        ┌────────┼───────────┐
        ▼        ▼           ▼
    ┌───────┐ ┌──────┐ ┌───────────┐
    │ Cache │ │  DB  │ │ Analytics │
    └───────┘ └──────┘ └───────────┘

API Design:

POST /api/shorten

Request: { "longUrl": "https://example.com/very/long/url" }

Response: { "shortUrl": "https://short.ly/abc123" }

GET /abc123

Response: 302 Redirect to original URL

Step 4: Detailed Design

URL Encoding Strategy:

Option 1: Hash-based

import hashlib

def encode_url_hash(long_url):

# MD5 hash of URL

hash_value = hashlib.md5(long_url.encode()).hexdigest()

# Take first 6 characters

short_code = hash_value[:6]

# Problem: Collisions possible

return short_code
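
To get a feel for how quickly collisions appear with Option 1, the birthday bound gives a rough estimate. The sketch below assumes that MD5 prefixes behave like uniformly random codes; the function name and the sample sizes are illustrative, not part of the design above.

```python
import math

def collision_probability(num_urls, code_space):
    """Birthday-bound approximation of P(at least one collision)."""
    return 1.0 - math.exp(-num_urls * (num_urls - 1) / (2.0 * code_space))

# 6 hex characters taken from an MD5 digest: 16^6 = 16,777,216 possible codes
HEX_CODE_SPACE = 16 ** 6

for n in (1_000, 10_000, 100_000):
    p = collision_probability(n, HEX_CODE_SPACE)
    print(f"{n:>7,} URLs -> P(collision) ~ {p:.3f}")
```

Under this approximation a collision is already likely after roughly ten thousand URLs, so a hash-based scheme needs a uniqueness check plus a retry (for example, re-hashing with a salt) on every insert.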

Option 2: Counter-based (Better: no collisions, but sequential codes are guessable)

    import string

    def encode_url_counter(counter):
        """
        Convert counter to base62 (0-9, a-z, A-Z)
        """
        chars = string.digits + string.ascii_lowercase + string.ascii_uppercase
        base = len(chars)  # 62
        if counter == 0:
            return chars[0]  # the loop below would otherwise return ''
        result = []
        while counter > 0:
            result.append(chars[counter % base])
            counter //= base
        return ''.join(reversed(result))

    # Examples:
    # 1 -> "1"
    # 62 -> "10"
    # 916132832 -> "100000" (62^5, the first 6-character code)
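
Because base62 is just a positional number system, a short code can also be decoded back to its counter value. The sketch below pairs an encoder with a hypothetical `base62_decode` helper (not part of the original design) and checks that the two round-trip.

```python
import string

CHARS = string.digits + string.ascii_lowercase + string.ascii_uppercase
INDEX = {ch: i for i, ch in enumerate(CHARS)}  # character -> digit value

def base62_encode(num):
    """Encode a non-negative integer as a base62 string."""
    if num == 0:
        return CHARS[0]
    digits = []
    while num > 0:
        num, rem = divmod(num, 62)
        digits.append(CHARS[rem])
    return ''.join(reversed(digits))

def base62_decode(code):
    """Decode a base62 string back to the integer it represents."""
    num = 0
    for ch in code:
        num = num * 62 + INDEX[ch]  # raises KeyError on invalid characters
    return num

for n in (0, 1, 62, 916132832):
    assert base62_decode(base62_encode(n)) == n

print(base62_encode(916132832))   # 100000
print(base62_decode("abc123"))    # 9326731923
```

One possible use, stated as an assumption rather than a recommendation: decoding the code on redirect lets the service look up the row by its integer primary key instead of by the string column.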

Database Schema:

    -- URLs table
    CREATE TABLE urls (
        id BIGINT PRIMARY KEY AUTO_INCREMENT,
        -- Nullable because the code is derived from the auto-increment id
        -- and filled in right after the insert; UNIQUE already creates an index
        short_code VARCHAR(7) UNIQUE,
        long_url TEXT NOT NULL,
        user_id BIGINT,
        created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
    );

-- Analytics table

CREATE TABLE analytics (

id BIGINT PRIMARY KEY AUTO_INCREMENT,

short_code VARCHAR(7),

clicked_at TIMESTAMP,

ip_address VARCHAR(45),

user_agent TEXT,

country VARCHAR(2),

INDEX idx_short_code (short_code),

INDEX idx_clicked_at (clicked_at)

);

Implementation:

from flask import Flask, request, redirect

import redis

import mysql.connector

app = Flask(__name__)

# Redis for caching

cache = redis.Redis(host='localhost', port=6379)

# MySQL connection

db = mysql.connector.connect(

host="localhost",

user="user",

password="password",

database="urlshortener"

)

def base62_encode(num):

chars = "0123456789abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ"

if num == 0:

return chars[0]

result = []

while num:

result.append(chars[num % 62])

num //= 62

return ''.join(reversed(result))

@app.route('/api/shorten', methods=['POST'])

def shorten_url():

long_url = request.json['longUrl']

# Get next ID from database

cursor = db.cursor()

cursor.execute(

"INSERT INTO urls (long_url) VALUES (%s)",

(long_url,)

)

db.commit()

url_id = cursor.lastrowid

short_code = base62_encode(url_id)

# Update short_code

cursor.execute(

"UPDATE urls SET short_code = %s WHERE id = %s",

(short_code, url_id)

)

db.commit()

# Cache it

cache.setex(short_code, 86400, long_url) # 24h TTL

return {

'shortUrl': f'https://short.ly/{short_code}',

'longUrl': long_url

}

@app.route('/<short_code>')

def redirect_url(short_code):

# Check cache first

long_url = cache.get(short_code)

if not long_url:

# Cache miss - query database

cursor = db.cursor()

cursor.execute(

"SELECT long_url FROM urls WHERE short_code = %s",

(short_code,)

)

result = cursor.fetchone()

if not result:

return "URL not found", 404

long_url = result[0]

# Update cache

cache.setex(short_code, 86400, long_url)

# Log analytics (async would be better)

log_click(short_code, request)

return redirect(long_url.decode() if isinstance(long_url, bytes) else long_url)

def log_click(short_code, request):

cursor = db.cursor()

cursor.execute(

"""INSERT INTO analytics

(short_code, ip_address, user_agent)

VALUES (%s, %s, %s)""",

(short_code, request.remote_addr, request.user_agent.string)

)

db.commit()

Step 5: Scale and Optimize

Caching Strategy:

# Multi-level caching

class URLCache:

def __init__(self):

self.local_cache = {} # Per-process dict; unbounded here, use a size-limited LRU in practice

self.redis = redis.Redis()

def get(self, short_code):

# Level 1: Local memory (fastest)

if short_code in self.local_cache:

return self.local_cache[short_code]

# Level 2: Redis (fast)

long_url = self.redis.get(short_code)

if long_url:

self.local_cache[short_code] = long_url

return long_url

# Level 3: Database (slow)

long_url = self.db_get(short_code)

if long_url:

self.redis.setex(short_code, 86400, long_url)

self.local_cache[short_code] = long_url

return long_url
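
The `local_cache` dict above grows without bound. One common way to get LRU behavior for the first cache level is an `OrderedDict` with a size cap. This is a minimal sketch; `LRUCache` and `max_entries` are names introduced here for illustration, and the class is not thread-safe.

```python
from collections import OrderedDict

class LRUCache:
    """Size-limited cache that evicts the least recently used entry."""

    def __init__(self, max_entries=10_000):
        self.max_entries = max_entries
        self._data = OrderedDict()

    def get(self, key):
        if key not in self._data:
            return None
        # Mark as most recently used
        self._data.move_to_end(key)
        return self._data[key]

    def put(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        # Evict from the least recently used end when over capacity
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)

cache = LRUCache(max_entries=2)
cache.put('abc123', 'https://example.com/a')
cache.put('def456', 'https://example.com/b')
cache.get('abc123')                            # touch: abc123 is now most recent
cache.put('ghi789', 'https://example.com/c')   # evicts def456
print(cache.get('def456'))                     # None
```

A local cache like this also needs a short TTL or an invalidation path if URLs can ever change, since each API server holds its own copy.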

Database Sharding:

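    import hashlib

    # Shard by short_code
    def get_shard(short_code):
        # Use a stable hash: Python's built-in hash() is salted per process
        # for strings, so it would map the same code to different shards
        shard_count = 10
        digest = hashlib.md5(short_code.encode()).hexdigest()
        return int(digest, 16) % shard_count

    def get_db_connection(short_code):
        shard_id = get_shard(short_code)
        return db_connections[shard_id]  # db_connections: one connection per shard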

Rate Limiting:

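    from flask_limiter import Limiter
    from flask_limiter.util import get_remote_address

    # Keyword arguments work across Flask-Limiter versions; 3.x no longer
    # accepts the app as the first positional argument
    limiter = Limiter(
        key_func=get_remote_address,
        app=app,
        default_limits=["100 per hour"]
    )

    @app.route('/api/shorten', methods=['POST'])
    @limiter.limit("10 per minute")
    def shorten_url():
        # ... implementation
        ...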

Step 6: Additional Considerations

Security:

- Validate URLs to prevent malicious links

- Rate limiting to prevent abuse

- HTTPS for all traffic

- Check for spam/phishing URLs

Monitoring:

- Track cache hit rate

- Monitor database query time

- Alert on high error rates

- Track redirect latency

Deployment:

- Use CDN for static assets

- Deploy in multiple regions

- Blue-green deployment for zero downtime

- Auto-scaling based on traffic

Example 2: Design Instagram

Step 1: Requirements

Functional:

- Upload photos/videos

- Follow other users

- View feed of followed users' posts

- Like and comment on posts

- Search users

Non-Functional:

- 500M daily active users

- Low latency (feed loads in < 1s)

- High availability (99.99%)

- Eventually consistent (likes can have slight delay)

Step 2: Capacity Estimation

Traffic:

- 500M DAU

- Each user uploads 2 photos/day = 1B photos/day

- Each user views 50 posts/day = 25B views/day

- Write: 1B / 86400 = ~12K writes/sec

- Read: 25B / 86400 = ~290K reads/sec

Storage:

- Average photo size: 2 MB

- 1B photos/day × 2 MB = 2 PB/day

- For 5 years: 2 PB × 365 × 5 = 3.6 EB

Bandwidth:

- Upload: 12K/sec × 2 MB = 24 GB/sec

- Download: 290K/sec × 2 MB = 580 GB/sec
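
The same back-of-envelope arithmetic for Instagram, as a quick check of the figures above. This is a sketch in decimal units (1 PB = 1e9 MB); the variable names are illustrative.

```python
# Inputs taken from the estimates above
DAU = 500_000_000
SECONDS_PER_DAY = 86_400
uploads_per_user = 2
views_per_user = 50
photo_mb = 2

uploads_per_day = DAU * uploads_per_user     # 1B photos/day
views_per_day = DAU * views_per_user         # 25B views/day

writes_per_sec = uploads_per_day / SECONDS_PER_DAY
reads_per_sec = views_per_day / SECONDS_PER_DAY

storage_per_day_pb = uploads_per_day * photo_mb / 1e9      # MB -> PB
storage_5_years_eb = storage_per_day_pb * 365 * 5 / 1e3    # PB -> EB

upload_gb_s = writes_per_sec * photo_mb / 1e3              # MB/s -> GB/s
download_gb_s = reads_per_sec * photo_mb / 1e3

print(f"writes/sec:       {writes_per_sec:,.0f}")
print(f"reads/sec:        {reads_per_sec:,.0f}")
print(f"storage per day:  {storage_per_day_pb:.1f} PB")
print(f"storage, 5 years: {storage_5_years_eb:.2f} EB")
print(f"upload:           {upload_gb_s:.1f} GB/s")
print(f"download:         {download_gb_s:.1f} GB/s")
```

The download figure assumes every view transfers the full 2 MB original, which is pessimistic: feeds typically serve smaller renditions, and a CDN absorbs most of that traffic.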

Step 3: High-Level Design

                     ┌──────────────┐
                     │ CDN Network  │
                     └──────┬───────┘
                            │
    ┌─────────┐      ┌──────▼───────┐
    │ Client  │─────▶│Load Balancer │
    └─────────┘      └──────┬───────┘
                            │
           ┌────────────────┼────────────────┐
           ▼                ▼                ▼
    ┌──────────────┐ ┌──────────────┐ ┌──────────────┐
    │ API Servers  │ │ Image Server │ │ Feed Service │
    └──────┬───────┘ └──────┬───────┘ └──────┬───────┘
           │                │                │
           ▼                ▼                ▼
    ┌──────────────┐ ┌──────────────┐ ┌──────────────┐
    │  PostgreSQL  │ │ Object Store │ │    Redis     │
    │  (Metadata)  │ │   (S3/GCS)   │ │   (Cache)    │
    └──────────────┘ └──────────────┘ └──────────────┘

Step 4: Detailed Design

Database Schema:

    -- Users table
    CREATE TABLE users (
        user_id BIGINT PRIMARY KEY,
        username VARCHAR(50) UNIQUE,
        email VARCHAR(255) UNIQUE,
        profile_pic_url TEXT,
        created_at TIMESTAMP
    );

    -- Posts table
    CREATE TABLE posts (
        post_id BIGINT PRIMARY KEY,
        user_id BIGINT REFERENCES users(user_id),
        image_url TEXT NOT NULL,
        caption TEXT,
        created_at TIMESTAMP
    );
    -- PostgreSQL has no inline INDEX clause, so indexes are created separately
    CREATE INDEX idx_user_created ON posts (user_id, created_at);

    -- Follows table
    CREATE TABLE follows (
        follower_id BIGINT REFERENCES users(user_id),
        followee_id BIGINT REFERENCES users(user_id),
        created_at TIMESTAMP,
        PRIMARY KEY (follower_id, followee_id)
    );
    -- The primary key already serves lookups by follower_id
    CREATE INDEX idx_followee ON follows (followee_id);

    -- Likes table
    CREATE TABLE likes (
        post_id BIGINT REFERENCES posts(post_id),
        user_id BIGINT REFERENCES users(user_id),
        created_at TIMESTAMP,
        PRIMARY KEY (post_id, user_id)  -- also serves lookups by post_id
    );

Image Upload Flow:

from flask import Flask, request

import boto3

import uuid

from PIL import Image

import io

app = Flask(__name__)

s3_client = boto3.client('s3')

@app.route('/api/upload', methods=['POST'])

def upload_photo():

file = request.files['photo']

user_id = request.form['user_id']

caption = request.form.get('caption', '')

# Generate unique ID

photo_id = str(uuid.uuid4())

# Process image

image = Image.open(file).convert('RGB')  # JPEG cannot store alpha or palette modes

# Create multiple sizes

sizes = {

'original': (1080, 1080),

'medium': (640, 640),

'thumbnail': (150, 150)

}

urls = {}

for size_name, dimensions in sizes.items():

# Resize image

resized = image.copy()

resized.thumbnail(dimensions, Image.LANCZOS)

# Convert to bytes

buffer = io.BytesIO()

resized.save(buffer, format='JPEG', quality=85)

buffer.seek(0)

# Upload to S3

key = f'photos/{user_id}/{photo_id}_{size_name}.jpg'

s3_client.upload_fileobj(

buffer,

'instagram-photos',

key,

ExtraArgs={'ContentType': 'image/jpeg'}

)

urls[size_name] = f'https://cdn.instagram.com/{key}'

# Save metadata to database

cursor = db.cursor()

cursor.execute(

"""INSERT INTO posts (user_id, image_url, caption, created_at)

VALUES (%s, %s, %s, NOW())""",

(user_id, urls['original'], caption)

)

db.commit()

post_id = cursor.lastrowid

# Fan-out: Push to followers' feeds (async)

fanout_to_followers(user_id, post_id)

return {

'post_id': post_id,

'urls': urls

}

Feed Generation:

Two approaches:

1. Pull Model (Fetch on demand):

def get_feed_pull(user_id, page=1, page_size=20):

"""

Fetch posts from followed users on demand

Good for: Users with many followees

"""

offset = (page - 1) * page_size

cursor = db.cursor()

cursor.execute("""

SELECT p.post_id, p.user_id, p.image_url, p.caption, p.created_at

FROM posts p

JOIN follows f ON p.user_id = f.followee_id

WHERE f.follower_id = %s

ORDER BY p.created_at DESC

LIMIT %s OFFSET %s

""", (user_id, page_size, offset))

return cursor.fetchall()

2. Push Model (Pre-compute feeds):

    import time
    import redis

    redis_client = redis.Redis()

    def fanout_to_followers(poster_id, post_id):
        """
        When user posts, push to all followers' feeds
        Good for: Users with few followers
        """
        # Get all followers
        cursor = db.cursor()
        cursor.execute(
            "SELECT follower_id FROM follows WHERE followee_id = %s",
            (poster_id,)
        )
        followers = cursor.fetchall()

        # Add post to each follower's feed (Redis sorted set, scored by time)
        now = time.time()
        for (follower_id,) in followers:
            key = f'feed:{follower_id}'
            redis_client.zadd(key, {post_id: now})
            # Keep only latest 500 posts
            redis_client.zremrangebyrank(key, 0, -501)

    def get_feed_push(user_id, page=1, page_size=20):
        """
        Fetch pre-computed feed from Redis
        """
        start = (page - 1) * page_size
        end = start + page_size - 1

        # Get post IDs from Redis sorted set, newest first
        raw_ids = redis_client.zrevrange(f'feed:{user_id}', start, end)
        post_ids = [int(pid) for pid in raw_ids]
        if not post_ids:
            return []  # an empty IN () list is invalid SQL

        # Fetch post details from database
        cursor = db.cursor()
        placeholders = ','.join(['%s'] * len(post_ids))
        cursor.execute(f"""
            SELECT post_id, user_id, image_url, caption, created_at
            FROM posts
            WHERE post_id IN ({placeholders})
        """, post_ids)

        # IN does not preserve order, so restore the feed order from Redis
        rows = {row[0]: row for row in cursor.fetchall()}
        return [rows[pid] for pid in post_ids if pid in rows]

Hybrid Approach (Best):

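    FANOUT_THRESHOLD = 10000  # followers

    def publish_post(poster_id, post_id):
        """
        Write path: push for authors with few followers.
        The decision depends on the author's follower count, not the reader's.
        """
        cursor = db.cursor()
        cursor.execute(
            "SELECT COUNT(*) FROM follows WHERE followee_id = %s",
            (poster_id,)
        )
        follower_count = cursor.fetchone()[0]

        # Threshold: 10K followers
        if follower_count < FANOUT_THRESHOLD:
            fanout_to_followers(poster_id, post_id)
        # Celebrity posts are not fanned out; readers pull them instead

    def get_feed_hybrid(user_id, page_size=20):
        """
        Read path: merge the pushed feed with posts pulled from
        followed celebrities with millions of followers
        """
        pushed = get_feed_push(user_id, page_size=page_size)
        # get_celebrity_posts is a hypothetical helper: a pull query like
        # get_feed_pull, restricted to followees above FANOUT_THRESHOLD
        pulled = get_celebrity_posts(user_id, limit=page_size)
        merged = sorted(list(pushed) + list(pulled),
                        key=lambda row: row[4], reverse=True)  # row[4] = created_at
        return merged[:page_size]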

Step 5: Scale and Optimize

Database Sharding:

# Shard by user_id

def get_shard_id(user_id):

return user_id % 100 # 100 shards

# When querying

shard_id = get_shard_id(user_id)

db = get_db_connection(shard_id)

Caching Strategy:

    import json
    import redis

    class FeedCache:
        def __init__(self):
            self.redis = redis.Redis()

        def get_cached_feed(self, user_id, page):
            key = f'feed:{user_id}:page:{page}'
            cached = self.redis.get(key)
            if cached:
                return json.loads(cached)

            # Cache miss - generate feed
            # (generate_feed is defined elsewhere and must return JSON-serializable data)
            feed = generate_feed(user_id, page)

            # Cache for 5 minutes
            self.redis.setex(key, 300, json.dumps(feed))
            return feed

        def invalidate_feed(self, user_id):
            """Invalidate when new post created"""
            pattern = f'feed:{user_id}:page:*'
            # SCAN iterates incrementally; KEYS would block Redis on a large keyspace
            keys = list(self.redis.scan_iter(match=pattern))
            if keys:
                self.redis.delete(*keys)

CDN for Images:

# CloudFront/Cloudflare configuration

CDN_DOMAINS = {

'us-east': 'https://us-east.cdn.instagram.com',

'us-west': 'https://us-west.cdn.instagram.com',

'europe': 'https://eu.cdn.instagram.com',

'asia': 'https://asia.cdn.instagram.com'

}

def get_image_url(image_key, user_location):

"""Serve from nearest CDN"""

cdn_domain = CDN_DOMAINS.get(user_location, CDN_DOMAINS['us-east'])

return f'{cdn_domain}/{image_key}'

Async Processing:

    from celery import Celery

    celery = Celery('tasks', broker='redis://localhost:6379')

    @celery.task
    def process_upload(file_path, user_id, caption):
        """Process upload asynchronously"""
        # Resize images
        # Upload to S3
        # Generate thumbnails
        # Update database
        # Fanout to followers
        pass

    @app.route('/api/upload', methods=['POST'])
    def upload_photo():
        user_id = request.form['user_id']
        caption = request.form.get('caption', '')

        # Save file temporarily (save_temp_file is a helper defined elsewhere)
        temp_path = save_temp_file(request.files['photo'])

        # Queue for processing
        task = process_upload.delay(temp_path, user_id, caption)
        return {'task_id': task.id, 'status': 'processing'}

Common Design Patterns

Pattern 1: Load Balancing

Round Robin:

class RoundRobinBalancer:

def __init__(self, servers):

self.servers = servers

self.index = 0

def get_server(self):

server = self.servers[self.index]

self.index = (self.index + 1) % len(self.servers)

return server

Consistent Hashing:

import hashlib

class ConsistentHash:

def __init__(self, nodes, virtual_nodes=150):

self.virtual_nodes = virtual_nodes

self.ring = {}

for node in nodes:

self.add_node(node)

def add_node(self, node):

for i in range(self.virtual_nodes):

key = f'{node}:{i}'

hash_value = int(hashlib.md5(key.encode()).hexdigest(), 16)

self.ring[hash_value] = node

def get_node(self, key):

if not self.ring:

return None

hash_value = int(hashlib.md5(key.encode()).hexdigest(), 16)

# Find closest node clockwise

for ring_key in sorted(self.ring.keys()):

if hash_value <= ring_key:

return self.ring[ring_key]

# Wrap around to first node

return self.ring[min(self.ring.keys())]
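
The `get_node` above sorts the whole ring on every lookup. A variant that keeps the hash positions in a sorted list and uses binary search does the same lookup in O(log n). This is a sketch of that idea; the class name `SortedRing` and the `remove_node` method are additions for illustration.

```python
import bisect
import hashlib

def _hash(key):
    return int(hashlib.md5(key.encode()).hexdigest(), 16)

class SortedRing:
    def __init__(self, nodes, virtual_nodes=150):
        self.virtual_nodes = virtual_nodes
        self._keys = []      # sorted hash positions on the ring
        self._owners = {}    # hash position -> node
        for node in nodes:
            self.add_node(node)

    def add_node(self, node):
        for i in range(self.virtual_nodes):
            h = _hash(f'{node}:{i}')
            if h not in self._owners:
                bisect.insort(self._keys, h)
            self._owners[h] = node

    def remove_node(self, node):
        # Drop every virtual node that belongs to this node
        self._keys = [h for h in self._keys if self._owners[h] != node]
        self._owners = {h: n for h, n in self._owners.items() if n != node}

    def get_node(self, key):
        if not self._keys:
            return None
        # First position clockwise from the key's hash, wrapping around
        idx = bisect.bisect_left(self._keys, _hash(key))
        if idx == len(self._keys):
            idx = 0
        return self._owners[self._keys[idx]]

ring = SortedRing(['node-a', 'node-b', 'node-c'])
keys = [f'user:{i}' for i in range(1000)]
before = {k: ring.get_node(k) for k in keys}
ring.remove_node('node-b')
moved = sum(1 for k in keys if ring.get_node(k) != before[k])
print(f'{moved} of {len(keys)} keys moved after removing node-b')
```

Only keys that lived on the removed node are reassigned; everything else stays put, which is the property that makes consistent hashing attractive for caches and shards.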

Pattern 2: Caching

Cache-Aside Pattern:

    def get_user(user_id):
        # Try cache first
        user = cache.get(f'user:{user_id}')
        if user:
            return user

        # Cache miss - fetch from database
        # (parameterized query; never interpolate values into SQL)
        user = db.query('SELECT * FROM users WHERE id = %s', (user_id,))

        # Update cache
        cache.set(f'user:{user_id}', user, ttl=3600)
        return user

Write-Through Cache:

    def update_user(user_id, data):
        # Update database (parameterized; the SET clause is elided here)
        db.update('UPDATE users SET ... WHERE id = %s', (user_id,))

        # Update cache as part of the same write path
        cache.set(f'user:{user_id}', data, ttl=3600)

Write-Behind Cache:

def update_user(user_id, data):

# Update cache immediately

cache.set(f'user:{user_id}', data, ttl=3600)

# Queue database update asynchronously

queue.push('db_updates', {'user_id': user_id, 'data': data})

Pattern 3: Rate Limiting

Token Bucket Algorithm:

import time

class TokenBucket:

def __init__(self, capacity, refill_rate):

self.capacity = capacity

self.tokens = capacity

self.refill_rate = refill_rate # tokens per second

self.last_refill = time.time()

def allow_request(self):

# Refill tokens

now = time.time()

elapsed = now - self.last_refill

self.tokens = min(

self.capacity,

self.tokens + elapsed * self.refill_rate

)

self.last_refill = now

# Check if request allowed

if self.tokens >= 1:

self.tokens -= 1

return True

return False

# Usage

bucket = TokenBucket(capacity=100, refill_rate=10) # 10 req/sec

@app.route('/api/endpoint')

def endpoint():

if not bucket.allow_request():

return {'error': 'Rate limit exceeded'}, 429

# Process request

return {'success': True}
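
The single `bucket` in the usage above is shared by every caller, so one noisy client can exhaust the limit for everyone. A minimal sketch of per-client buckets follows. The `PerClientLimiter` class, the injectable `clock` parameter, and the choice of client key are assumptions for illustration, and the code is not thread-safe.

```python
import time

class TokenBucket:
    def __init__(self, capacity, refill_rate, clock=time.monotonic):
        self.capacity = capacity
        self.tokens = capacity
        self.refill_rate = refill_rate  # tokens per second
        self.clock = clock
        self.last_refill = clock()

    def allow_request(self):
        # Refill based on elapsed time, capped at capacity
        now = self.clock()
        elapsed = now - self.last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

class PerClientLimiter:
    """Keeps one token bucket per client key (e.g. user ID or IP address)."""

    def __init__(self, capacity, refill_rate, clock=time.monotonic):
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self._buckets = {}

    def allow(self, client_id):
        bucket = self._buckets.get(client_id)
        if bucket is None:
            bucket = TokenBucket(self.capacity, self.refill_rate, self.clock)
            self._buckets[client_id] = bucket
        return bucket.allow_request()

limiter = PerClientLimiter(capacity=2, refill_rate=1)
print(limiter.allow('10.0.0.1'))  # True
print(limiter.allow('10.0.0.1'))  # True
print(limiter.allow('10.0.0.2'))  # True: a different client has its own bucket
```

In a multi-server deployment the bucket state would need to live in a shared store such as Redis, and idle buckets should be expired so the dictionary does not grow forever.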

Sliding Window:

import redis

import time

class SlidingWindowRateLimiter:

def __init__(self, redis_client, max_requests, window_seconds):

self.redis = redis_client

self.max_requests = max_requests

self.window = window_seconds

def is_allowed(self, user_id):

key = f'rate_limit:{user_id}'

now = time.time()

window_start = now - self.window

# Remove old entries

self.redis.zremrangebyscore(key, 0, window_start)

# Count requests in window

request_count = self.redis.zcard(key)

if request_count < self.max_requests:

# Add current request

self.redis.zadd(key, {now: now})

self.redis.expire(key, self.window)

return True

return False
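
For unit tests or a single-process service, the same sliding-window logic can be sketched without Redis using a deque of timestamps per user. The class name and the injectable `clock` are assumptions for illustration; the eviction rule mirrors the Redis version above (entries at or before the window start are dropped).

```python
import time
from collections import defaultdict, deque

class InMemorySlidingWindow:
    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window = window_seconds
        self.clock = clock
        self._hits = defaultdict(deque)  # user_id -> timestamps, oldest first

    def is_allowed(self, user_id):
        now = self.clock()
        hits = self._hits[user_id]

        # Remove entries that have left the window
        while hits and hits[0] <= now - self.window:
            hits.popleft()

        if len(hits) < self.max_requests:
            hits.append(now)
            return True
        return False

limiter = InMemorySlidingWindow(max_requests=3, window_seconds=60)
print([limiter.is_allowed('user-1') for _ in range(4)])  # [True, True, True, False]
```

Unlike the Redis version, this state is lost on restart and is not shared between servers, so it only illustrates the algorithm.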

Pattern 4: Database Scaling

Read Replicas:

class DatabasePool:

def __init__(self):

self.master = connect_to_db('master')

self.replicas = [

connect_to_db('replica1'),

connect_to_db('replica2'),

connect_to_db('replica3')

]

self.replica_index = 0

def write(self, query):

"""All writes go to master"""

return self.master.execute(query)

def read(self, query):

"""Reads distributed across replicas"""

replica = self.replicas[self.replica_index]

self.replica_index = (self.replica_index + 1) % len(self.replicas)

return replica.execute(query)

Database Sharding:

    import hashlib

    class ShardedDatabase:
        def __init__(self, shards):
            self.shards = shards
            self.shard_count = len(shards)

        def get_shard(self, key):
            """Determine which shard contains the key"""
            # Stable hash; built-in hash() is salted per process for strings
            digest = hashlib.md5(str(key).encode()).hexdigest()
            shard_id = int(digest, 16) % self.shard_count
            return self.shards[shard_id]

        def insert(self, key, value):
            shard = self.get_shard(key)
            # Parameterized to avoid SQL injection; kv_store, k, v are placeholder names
            shard.execute("INSERT INTO kv_store (k, v) VALUES (%s, %s)", (key, value))

        def get(self, key):
            shard = self.get_shard(key)
            return shard.execute("SELECT * FROM kv_store WHERE k = %s", (key,))

Trade-offs in System Design

Every design decision involves trade-offs. Here are the most important ones:

1. Consistency vs Availability (CAP Theorem)

Strong Consistency Eventual Consistency

↓ ↓

All nodes see same Nodes may temporarily

data at same time have different data

↓ ↓

Lower availability Higher availability

Slower writes Faster writes

↓ ↓

Use for: Banking, Use for: Social media,

inventory, bookings likes, views, comments

Example:

# Strong consistency (single-database ACID transaction)

def transfer_money(from_account, to_account, amount):

# Transaction ensures both succeed or both fail

with db.transaction():

db.deduct(from_account, amount)

db.add(to_account, amount)

# If any fails, both rollback

# Eventual consistency

def like_post(post_id, user_id):

# Update multiple caches independently

cache1.increment(f'likes:{post_id}')

cache2.add_to_set(f'likers:{post_id}', user_id)

# May be temporarily inconsistent

queue.push('sync_likes', {'post_id': post_id, 'user_id': user_id})

2. SQL vs NoSQL

SQL (PostgreSQL, MySQL) NoSQL (MongoDB, Cassandra)

↓ ↓

Structured schema Flexible schema

ACID transactions Eventually consistent

Complex queries Simple queries

Vertical scaling Horizontal scaling

↓ ↓

Use for: Financial, Use for: Logs, analytics,

user data, orders time-series, sessions

3. Synchronous vs Asynchronous Processing

# Synchronous (blocking)

@app.route('/upload')

def upload_file():

file = request.files['file']

# User waits for all of this

resize_image(file) # 2 seconds

upload_to_s3(file) # 3 seconds

generate_thumbnail(file) # 2 seconds

update_database(file) # 1 second

return {'success': True} # 8 seconds total

# Asynchronous (non-blocking)

@app.route('/upload')

def upload_file():

file = request.files['file']

# Queue for background processing

task_id = queue.enqueue(process_file, file)

return {'task_id': task_id} # Returns immediately

# Background worker

def process_file(file):

resize_image(file)

upload_to_s3(file)

generate_thumbnail(file)

update_database(file)

4. Normalization vs Denormalization

-- Normalized (avoid duplication)

CREATE TABLE users (

id INT PRIMARY KEY,

username VARCHAR(50)

);

CREATE TABLE posts (

id INT PRIMARY KEY,

user_id INT REFERENCES users(id),

content TEXT

);

-- Need JOIN to get username with post

SELECT p.content, u.username

FROM posts p

JOIN users u ON p.user_id = u.id;

-- Denormalized (duplicate data for speed)

CREATE TABLE posts (

id INT PRIMARY KEY,

user_id INT,

username VARCHAR(50), -- Duplicated!

content TEXT

);

-- No JOIN needed

SELECT content, username FROM posts;

Interview Tips

1. Start with Clarifying Questions

Don't jump into design immediately. Ask:

Functional Requirements:

- What features are most important?

- What's the expected user flow?

- Are there any features we can defer?

Scale Requirements:

- How many users?

- How many requests per second?

- How much data storage needed?

- Read vs write ratio?

Performance Requirements:

- What's acceptable latency?

- Uptime requirements?

- Consistency vs availability priority?

2. Think Out Loud

Interviewers want to understand your thought process:

❌ Bad: "I'll use Redis for caching"

✅ Good: "We have a read-heavy workload with 100:1 read-write

ratio, so caching will significantly reduce database load.

Redis is a good fit because it's in-memory, supports TTL,

and can handle millions of ops/sec. We could also consider

Memcached, but Redis gives us more data structures if we

need them later."

3. Discuss Trade-offs

Every decision has pros and cons:

"We could use a microservices architecture which gives us:

+ Independent scaling of components

+ Team autonomy

+ Technology flexibility

But also:

- Increased operational complexity

- Network latency between services

- Distributed system challenges

Given our team size and requirements, I'd recommend

starting with a monolith and splitting into services

as we identify bottlenecks."

4. Draw Diagrams

Visual communication is crucial:

Components:

┌─────────┐ = Service/Server

│ │

├─────────┤ = Database

│ │

[ ] = Queue

──▶ = Data flow

┄┄▶ = Async flow

5. Know Your Numbers

Memorize these latencies:

L1 cache: 0.5 ns

L2 cache: 7 ns

RAM: 100 ns

SSD: 150 μs

Network (same DC): 500 μs

HDD: 10 ms

Network (cross-continent round trip): 150 ms

1 million requests/day = ~12 requests/second

1 billion requests/day = ~12K requests/second

Practice Problems

Beginner Level

- Design a Pastebin (like pastebin.com)

   - Store and retrieve text snippets

   - Generate unique URLs

   - Set expiration times

- Design a Key-Value Store (like Redis)

   - GET/SET operations

   - TTL support

   - Persistence

- Design a Web Crawler

   - Crawl websites systematically

   - Avoid duplicates

   - Respect robots.txt

Intermediate Level

- Design Twitter

   - Post tweets

   - Follow users

   - View timeline

   - Trending topics

- Design Uber

   - Match riders with drivers

   - Real-time location tracking

   - Fare calculation

   - ETA estimation

- Design Netflix

   - Video streaming

   - Recommendations

   - Search

   - Continue watching

Advanced Level

- Design WhatsApp

   - Real-time messaging

   - Group chats

   - Message delivery confirmation

   - End-to-end encryption

- Design Google Search

   - Web indexing

   - Query processing

   - Ranking algorithm

   - Auto-complete

- Design YouTube

   - Video upload and processing

   - Streaming at scale

   - Comments and recommendations

   - Live streaming

Resources for Learning

Books

- "Designing Data-Intensive Applications" by Martin Kleppmann (Best overall)

- "System Design Interview" by Alex Xu (Interview-focused)

- "Database Internals" by Alex Petrov (Deep dive)

Online Courses

- Grokking the System Design Interview (educative.io)

- System Design Primer (GitHub - free)

- Scalability Lectures (Harvard CS75)

Practice Platforms

- Pramp - Mock interviews with peers

- Interviewing.io - Practice with engineers

- LeetCode - System design section

YouTube Channels

- Gaurav Sen - System design concepts

- Tech Dummies - Design deep dives

- ByteByteGo - Visual explanations

Blogs

- High Scalability (highscalability.com)

- Engineering blogs: Netflix, Uber, Airbnb tech blogs

- Martin Fowler's blog (martinfowler.com)

Conclusion

System design is not about memorizing solutions—it's about understanding principles and applying them to solve problems. The best system designers:

- Ask clarifying questions before designing

- Understand trade-offs and explain them clearly

- Start simple and iterate

- Consider non-functional requirements (scale, reliability, performance)

- Think about operations and monitoring

- Know when to optimize and when "good enough" is fine

Key Takeaways

- There's no perfect design - Every solution has trade-offs

- Start simple - Add complexity only when needed

- Think in terms of components - Break problems into manageable pieces

- Consider the entire lifecycle - Deployment, monitoring, maintenance matter

- Practice regularly - System design is a skill that improves with practice

Next Steps

- Study one system deeply this week - Pick URL shortener or Pastebin

- Design something you use daily - How would you build Twitter, Instagram?

- Join a study group - Discuss designs with peers

- Read engineering blogs - Learn from production systems

- Do mock interviews - Practice explaining your designs

Remember: Companies hire you to build systems that solve real problems for real users. System design skills make you valuable because you can think beyond individual features and see the bigger picture.

Start practicing today. Pick a system, work through the framework, and you'll be surprised how quickly you improve.